If you have determined which resource will limit the speed of your program, you can go directly to the section that discusses appropriate techniques for minimizing the use of that resource. Otherwise, assume that the program will be balanced and that all of the recommendations in this chapter apply. Once the program is implemented, proceed to Identifying the Performance-Limiting Resource.

The maximum speed of a truly processor-limited program is determined by:

If the program is CPU-limited because it consists almost entirely of numerical computation, the chosen algorithm will have a major effect on the performance of the program. A discussion of alternative algorithms is beyond the scope of this book. It is assumed that computational efficiency has been considered in choosing the algorithm.

Given an algorithm, the only items in the preceding list that the programmer can affect are the source code, the compiler options used, and possibly the data structures. The following sections deal with techniques that can be used to improve the efficiency of an individual program for which the user has the source code. If the source code is not available, attempt to use tuning or workload-management techniques.

In Chapter 1. Performance Concepts, we indicated that processors have a multilevel hierarchy of memory:

As instructions and data move up the hierarchy, they move into storage that is faster than the level below it, but also smaller and more expensive. To obtain the maximum possible performance from a given machine, therefore, the programmer must make the most effective use of the available storage at each level.

Effective use of storage means keeping it full of instructions and data that are likely to be used. An obstacle to achieving this objective is the fact that storage is allocated in fixed-length blocks such as cache lines and real memory pages that usually do not correspond to boundaries within programs or data structures. Programs and data structures that are designed without regard to the storage hierarchy often make inefficient use of the storage allocated to them, with adverse performance effects in small or heavily loaded systems.

Taking the storage hierarchy into account means understanding and adapting to the general principles of efficient programming in a cached or virtual-memory environment. Repackaging techniques can yield significant improvements without recoding, and any new code should be designed with efficient storage use in mind.

Two terms are essential to any discussion of the efficient use of hierarchical storage: locality of reference and working set.

A program with good locality of reference has a minimal working set, because the blocks that are in use are tightly packed with executing code or data. A functionally equivalent program with poor locality of reference has a larger working set, because more blocks are needed to accommodate the wider range of addresses being accessed.

Because each block takes a significant amount of time to load into a given level of the hierarchy, the objective of efficient programming for a hierarchical-storage system is to design and package code in such a way that the working set remains as small as practical.

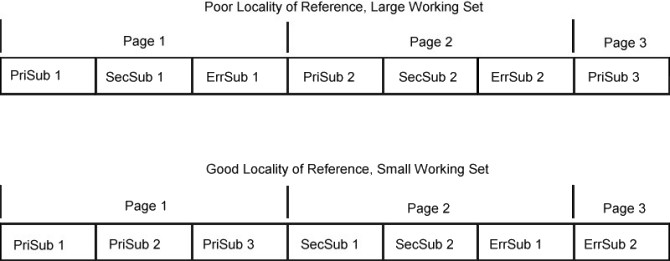

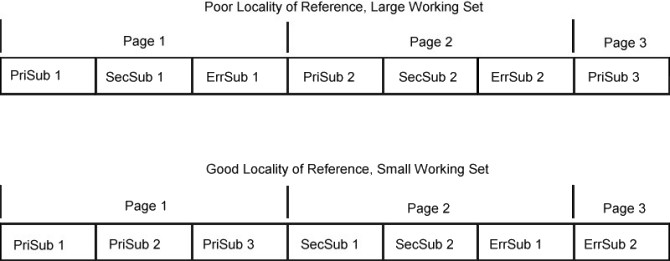

The following figure illustrates good and bad practice at a subroutine level. The first version of the program is packaged in the sequence in which it was probably written. The first subroutine PriSub1 contains the entry point of the program. It always uses primary subroutines PriSub2 and PriSub3. Some infrequently used functions of the program require secondary subroutines SecSub1 and SecSub2. On rare occasions, the error subroutines ErrSub1 and ErrSub2 are needed.

Figure 4-1. Locality of Reference. The top half of the figure describes how a binary program is packaged which shows low locality of reference. The instructions for PriSub1 is in the binary executable first, followed by the instructions for SecSub1, ErrSub1, PriSub2, SecSub2, ErrSub2, and PriSub3. In this executable, the instructions for PriSub1, SecSub1, and ErrSub1 occupy into the first page of memory. The instructions for PriSub2, SecSub2, and ErrSub2 occupy the second page of memory, and the instructions for PriSub3 occupy the third page of memory. SecSub1 and SecSub2 are infrequently used; also ErrSub1 and ErrSub2 are rarely used, if ever. Therefore, the packaging of this program exhibits poor locality of reference and may use more memory than required. In the second half of the figure, PriSub1, PriSub2, and PriSub3 are located next to each other and occupy the first page of memory. Following PriSub3 is SecSub1, SecSub2, and ErrSub1 which all occupy the second page of memory. Finally, ErrSub2 is at the end and occupies the third page of memory. Because ErrSub2 may never be needed, it would reduce the memory requirements by one page in this case.

|

The initial version of the program has poor locality of reference because it takes three pages of memory to run in the normal case. The secondary and error subroutines separate the main path of the program into three, physically distant sections.

The improved version of the program places the primary subroutines adjacent to one another and puts the low-frequency function after that. The necessary error subroutines (which are rarely-used) are left at the end of the executable program. The most common functions of the program can now be handled with only one disk read and one page of memory instead of the three previously required.

Remember that locality of reference and working set are defined with respect to time. If a program works in stages, each of which takes a significant time and uses a different set of subroutines, try to minimize the working set of each stage.

In general, allocating and optimizing of register space and keeping the pipeline full are the responsibilities of the compilers. The programmer's main obligation is to avoid structures that defeat compiler-optimization techniques. For example, if you use one of your subroutines in one of the critical loops of your program, it may be appropriate for the compiler to inline that subroutine to minimize execution time. If the subroutine has been packaged in a different .c module, however, it cannot be inlined by the compiler.

Depending on the processor architecture and model, processors have from one to several caches to hold the following:

If a cache miss occurs, loading a complete cache line can take dozens of processor cycles. If a TLB miss occurs, calculating the virtual-to-real mapping of a page can take several dozen cycles. The exact cost is implementation-dependent.

Even if a program and its data fit in the caches, the more lines or TLB entries used (that is, the lower the locality of reference), the more CPU cycles it takes to get everything loaded in. Unless the instructions and data are reused many times, the overhead of loading them is a significant fraction of total program execution time, resulting in degraded system performance.

Good programming techniques keep the main-line, typical-case flow of the program as compact as possible. The main procedure and all of the subroutines it calls frequently should be contiguous. Low-probability conditions, such as obscure errors, should be tested for only in the main line. If the condition actually occurs, its processing should take place in a separate subroutine. All such subroutines should be grouped together at the end of the module. This arrangement reduces the probability that low-usage code will take up space in a high-usage cache line. In large modules, some or all of the low-usage subroutines might occupy a page that almost never has to be read into memory.

The same principle applies to data structures, although it is sometimes necessary to change the code to compensate for the compiler's rules about data layout.

For example, some matrix operations, such as matrix multiplication, involve algorithms that, if coded simplistically, have poor locality of reference. Matrix operations generally involve accessing the matrix data sequentially, such as row elements acting on column elements. Each compiler has specific rules about the storage layout of matrixes. The FORTRAN compiler lays out matrixes in column-major format (that is, all of the elements of column 1, followed by all the elements of column 2, and so forth). The C compiler lays out matrixes in row-major format. If the matrixes are small, the row and column elements can be contained in the data cache, and the processor and floating-point unit can run at full speed. However, as the size of the matrixes increases, the locality of reference of such row/column operations deteriorates to a point where the data can no longer be maintained in the cache. In fact, the natural access pattern of the row/column operations generates a thrashing pattern for the cache where a string of elements accessed is larger than the cache, forcing the initially accessed elements out and then repeating the access pattern again for the same data.

The general solution to such matrix access patterns is to partition the operation into blocks, so that multiple operations on the same elements can be performed while they remain in the cache. This general technique is given the name strip mining.

Experts in numerical analysis were asked to code versions of the matrix-manipulation algorithms that made use of strip mining and other optimization techniques. The result was a 30-fold improvement in matrix-multiplication performance. The tuned routines are in the Basic Linear Algebra Subroutines (BLAS) library, /usr/lib/libblas.a. A larger set of performance-tuned subroutines is the Engineering and Scientific Subroutine Library (ESSL) licensed program.

The functions and interfaces of the Basic Linear Algebra Subroutines are documented in AIX 5L Version 5.1 Technical Reference. The FORTRAN run-time environment must be installed to use the library. Users should generally use this library for their matrix and vector operations because its subroutines are tuned to a degree that users are unlikely to achieve by themselves.

If the data structures are controlled by the programmer, other efficiencies are possible. The general principle is to pack frequently used data together whenever possible. If a structure contains frequently accessed control information and occasionally accessed detailed data, make sure that the control information is allocated in consecutive bytes. This will increase the probability that all of the control information will be loaded into the cache with a single (or at least with the minimum number of) cache misses.

The programmer who wants to obtain the highest possible performance from a given program running on a given machine must deal with several considerations:

Programmers who are unable to experiment, should always optimize. The difference in performance between optimized and unoptimized code is almost always so large that basic optimization (the -O option of the compiler commands) should always be used. The only exceptions are testing situations in which there is a specific need for straightforward code generation, such as statement-level performance analysis using the tprof tool.

These techniques yield additional performance improvement for some programs, but the determination of which combination yields the best performance for a specific program might require considerable recompilation and measurement.

For an extensive discussion of the techniques for efficient use of compilers, see Optimization and Tuning Guide for XL Fortran, XL C and XL C++.

The levels of optimization in the compilers are as follows:

In the absence of any version of the -O flag, the compiler generates straightforward code with no instruction reordering or other attempt at performance improvement.

These equivalent flags cause the compiler to optimize on the basis of conservative assumptions about code reordering. Only explicit relaxations such as the #pragma directives are used. This level performs no software pipelining, loop unrolling, or simple predictive commoning. It also constrains the amount of memory the compiler can use.

This flag directs the compiler to be aggressive about the optimization techniques used and to use as much memory as necessary for maximum optimization.

This level of optimization may result in functional changes to the program if the program is sensitive to the following:

These side effects can be avoided, at some performance cost, by using the -qstrict option in combination with -O3.

The -qhot option, in combination with -O3, enables predictive commoning and some unrolling.

The result of these changes is that large or complex routines should have the same or better performance with the -O3 option (possibly in conjunction with -qstrict or -qhot) that they had with the -O option in earlier versions of the compiler.

Systems can use several type of processors. By using the -qarch and -qtune options, you can optimize programs for the special instructions and particular strengths of these processors.

Follow these guidelines:

The operating system provides the ability to embed the string subroutines in the application program rather than using them from libc.a, saving call and return linkage time. To embed the string subroutines, the source code of the application must have the following statement prior to the use of the subroutine(s):

#include <string.h>

In many cases, the performance cost of a C construct is not obvious, and sometimes is even counter-intuitive. Some of these situations are as follows:

In most cases, char and short data items take more instructions to manipulate. The extra instructions cost time, and, except in large arrays, any space that is saved by using the smaller data types is more than offset by the increased size of the executable program.

A signed char takes another two instructions more than an unsigned char each time the variable is loaded into a register.

Global variables require more instructions to access than local variables. Also, in the absence of information to the contrary, the compiler assumes that any global variable may have been changed by a subroutine call. This change has an adverse effect on optimization because the value of any global variable used after a subroutine call will have to be reloaded.

Unless the global variable is accessed only once, it is more efficient to use the local copy.

#define situation_1 1 #define situation_2 2 #define situation_3 3 int situation_val; situation_val = situation_2; . . . if (situation_val == situation_1) . . .

is much more efficient than the following sequence:

char situation_val[20]; strcpy(situation_val,"situation_2"); . . . if ((strcmp(situation_val,"situation_1"))==0) . . .

The mem*() family of routines, such as memcpy(), is faster than the corresponding str*() routines, such as strcpy(), because the str*() routines must check each byte for null and the mem*() routines do not.

In the operating system, the C compiler can be invoked by two different commands: cc and xlc. The cc command, which has historically been used to invoke the system's C compiler, causes the C compiler to run in langlevel=extended mode. This mode allows the compilation of existing C programs that are not ANSI-compliant. It also consumes processor time.

If the program being compiled is, in fact, ANSI-compliant, it is more efficient to invoke the C compiler by using the xlc command.

Use of the -O3 flag implicitly includes the -qmaxmem option. This option allows the compiler to use as much memory as necessary for maximum optimization. This situation can have two effects:

To programmers accustomed to struggling with the addressing limitations of, for instance, the DOS environment, 256 MB virtual memory segments seem effectively infinite. The programmer is tempted to ignore storage constraints and code for minimum path length and maximum simplicity. Unfortunately, there is a drawback to this attitude. Virtual memory is large, but it is variable-speed. The more memory used, the slower it becomes, and the relationship is not linear. As long as the total amount of virtual storage actually being touched by all programs (that is, the sum of the working sets) is slightly less than the amount of unpinned real memory in the machine, virtual memory performs at about the speed of real memory. As the sum of the working sets of all executing programs passes the number of available page frames, memory performance degrades rapidly (if VMM memory load control is turned off) by up to two orders of magnitude. When the system reaches this point, it is said to be thrashing. It is spending almost all of its time paging, and no useful work is being done because each process is trying to steal back from other processes the storage necessary to accommodate its working set. If VMM memory load control is active, it can avoid this self-perpetuating thrashing, but at the cost of significantly increased response times.

The degradation caused by inefficient use of memory is much greater than that from inefficient use of the caches because the difference in speed between memory and disk is so much higher than the difference between cache and memory. Where a cache miss can take a few dozen CPU cycles, a page fault typically takes 10 milliseconds or more, which is at least 400,000 CPU cycles.

Although VMM memory load control can ensure that incipient thrashing situations do not become self-perpetuating, unnecessary page faults still exact a cost in degraded response time and reduced throughput (see Tuning VMM Memory Load Control with the schedtune Command).

To minimize the code working set of a program, the general objective is to pack code that is frequently executed into a small area, separating it from infrequently executed code. Specifically:

To minimize the data working set, try to concentrate the frequently used data and avoid unnecessary references to virtual-storage pages. Specifically:

To avoid circularities and time-outs, a small fraction of the system must be pinned in real memory. For this code and data, the concept of working set is meaningless, because all of the pinned information is in real storage all the time, whether or not it is used. Any program (such as a user-written device driver) that pins code or data must be carefully designed (or scrutinized, if ported) to ensure that only minimal amounts of pinned storage are used. Some cautionary examples are as follows: